Robotics is moving away from traditional multi-stage pipelines toward integrated approaches that merge vision, language, and action in a single system. Companies are combining these domains so that robots can interpret complex instructions and adapt their actions accordingly. NVIDIA, Figure AI, and Google DeepMind have each introduced models that prioritize real-time performance and generalization. This shift may let robots operate more efficiently in unpredictable environments and handle tasks previously considered too intricate for automation. Moving from separate modules to a single model also raises questions about deployment, hardware constraints, and safety for companies working to adopt these advances. The developments come as robotics gains prominence across sectors that demand reliable, intelligent autonomy.

Early vision-language-action (VLA) systems were evaluated mostly in simulation or in limited real-world trials. Announcements around Figure AI’s Helix, NVIDIA’s GR00T N1, and Google DeepMind’s models point to more mature deployment and improved robustness compared to prior attempts. Recent demonstrations show lower latency, broader generalization, and tighter integration with physical hardware than earlier research models could manage. Progress is clear, but robustness, efficiency, and benchmarking remain open problems, echoing the concerns and hopes raised in previous industry reports.

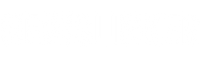

How Do Vision-Language-Action Models Work?

These models take high-dimensional sensory inputs, such as camera images, together with text commands, and translate them directly into motor actions for robots. Conventional robot systems separate perception, planning, and control into distinct modules, whereas VLA models treat the problem end to end. This integration supports flexibility: because perception, language understanding, and control are learned together rather than engineered separately, robots using these models can perform tasks in less-structured settings.
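The contrast between a modular pipeline and an end-to-end policy can be sketched in a few lines of Python. This is only an illustration of the two interfaces, not any vendor's implementation: the class names `ModularPipeline` and `EndToEndPolicy`, the toy functions, and the two-element action vector are all hypothetical, and the "model" is a hand-written rule standing in for a learned network.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Image = List[List[float]]   # toy stand-in for a camera frame
Action = List[float]        # toy stand-in for motor commands


@dataclass
class ModularPipeline:
    """Conventional design: three separately built stages, chained by hand."""
    perceive: Callable[[Image], Dict[str, float]]
    plan: Callable[[Dict[str, float], str], List[str]]
    control: Callable[[List[str]], Action]

    def act(self, image: Image, instruction: str) -> Action:
        scene = self.perceive(image)            # perception
        steps = self.plan(scene, instruction)   # planning
        return self.control(steps)              # control


@dataclass
class EndToEndPolicy:
    """VLA-style design: one function maps (image, text) straight to an action."""
    model: Callable[[Image, str], Action]

    def act(self, image: Image, instruction: str) -> Action:
        return self.model(image, instruction)


def mean_brightness(image: Image) -> float:
    pixels = [p for row in image for p in row]
    return sum(pixels) / len(pixels)


# Hypothetical toy stages for the modular pipeline.
def toy_perceive(image: Image) -> Dict[str, float]:
    return {"brightness": mean_brightness(image)}


def toy_plan(scene: Dict[str, float], instruction: str) -> List[str]:
    verb = instruction.split()[0].lower()
    return [verb] if scene["brightness"] > 0.1 else ["wait"]


def toy_control(steps: List[str]) -> Action:
    return [1.0, 0.0] if steps[0] == "pick" else [0.0, 0.0]


# Hypothetical stand-in for a learned VLA network.
def toy_vla_model(image: Image, instruction: str) -> Action:
    gate = 1.0 if "pick" in instruction.lower() else 0.0
    return [gate * min(1.0, 2.0 * mean_brightness(image)), 0.0]


frame = [[0.5, 0.5], [0.5, 0.5]]
modular = ModularPipeline(toy_perceive, toy_plan, toy_control)
end_to_end = EndToEndPolicy(toy_vla_model)

print(modular.act(frame, "pick up the cup"))
print(end_to_end.act(frame, "pick up the cup"))
```

Both objects expose the same `act(image, instruction)` call. The difference is where the boundaries sit: the pipeline fixes the intermediate representations (a scene dictionary, a list of steps) in advance, while the end-to-end policy leaves them to whatever the model learns.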

What Are the Main Developments from Leading Brands?

Figure AI’s Helix, NVIDIA’s GR00T N1, and Google DeepMind’s RT-2 each take a distinct approach to unified robot control. Helix pairs a high-level reasoning backbone with a separate, real-time controller for dexterous manipulation. GR00T N1 emphasizes pretraining on diverse datasets so that it can adapt across multiple robot platforms and task types. According to NVIDIA,

“With GR00T N1, we want to bring a general-purpose, vision-language-action model to real-world robots, offering broad capabilities for future applications.”

Meanwhile, RT-2 builds on multimodal backbones and is optimized for scalability, offline operation, and generalization to unfamiliar situations.
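The split described for Helix, a reasoning backbone alongside a separate real-time controller, can be illustrated with a toy two-rate loop. The sketch below is not Figure AI's code: `SlowReasoner`, `FastController`, the proportional control rule, and the ratio of ten control ticks per reasoning update are all assumptions chosen only to show how a slow component can set goals that a fast component tracks.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class SlowReasoner:
    """Hypothetical stand-in for a high-level reasoning backbone."""
    calls: int = 0

    def infer_goal(self, instruction: str, position: float) -> float:
        self.calls += 1
        # Toy rule in place of a learned model: "raise" means target 1.0.
        return 1.0 if "raise" in instruction.lower() else 0.0


@dataclass
class FastController:
    """Hypothetical real-time controller: a simple proportional step."""
    gain: float = 0.5

    def step(self, position: float, goal: float) -> float:
        return self.gain * (goal - position)


def run_dual_system(
    instruction: str, ticks: int = 20, reason_every: int = 10
) -> Tuple[List[float], int]:
    """Run the fast loop every tick and the slow loop every `reason_every` ticks."""
    reasoner, controller = SlowReasoner(), FastController()
    position, goal = 0.0, 0.0
    trajectory: List[float] = []
    for t in range(ticks):
        if t % reason_every == 0:
            # Slow path: refresh the goal only occasionally.
            goal = reasoner.infer_goal(instruction, position)
        # Fast path: track the most recent goal on every tick.
        position += controller.step(position, goal)
        trajectory.append(position)
    return trajectory, reasoner.calls


trajectory, reasoner_calls = run_dual_system("raise the arm")
print(f"final position: {trajectory[-1]:.4f}, reasoner calls: {reasoner_calls}")
```

In this toy run the controller executes twenty times while the reasoner is consulted only twice, which is the general appeal of such a split: the expensive component does not have to keep pace with the control loop.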

What Practical Challenges Do Robotics Teams Face?

Operating in the physical world introduces additional hurdles, including noisy sensors, variable environments, and limited onboard hardware. Large VLA models can strain computational resources, which complicates deployment on mobile robots, and efforts to build smaller, more efficient models aim to ease that constraint. The industry is also still debating standardized benchmarking, because fair comparison across simulation and real-world setups remains elusive. Figure AI comments,

“Our research with Helix shows that a dual-system VLA can bridge reasoning and control, but bringing these models to deployment requires cautious validation and robust engineering.”
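The hardware constraint mentioned above can be made concrete with a back-of-the-envelope estimate of how much memory a model's weights occupy at different numeric precisions. The parameter counts and the 16 GB device below are hypothetical examples, not figures for any of the models named in this article, and the 50% headroom rule is an assumption. The estimate covers weights only and ignores activations, caches, and the rest of the robot's software stack.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def fits_on_device(
    num_params: float, dtype: str, device_memory_gb: float, headroom: float = 0.5
) -> bool:
    """Check whether weights fit in an assumed fraction of device memory.

    `headroom` is the share of memory reserved for weights; the remainder is
    left for activations and other processes. The 0.5 default is an assumption.
    """
    return weight_memory_gb(num_params, dtype) <= device_memory_gb * headroom


# Hypothetical model sizes on a hypothetical 16 GB onboard computer.
for params in (2e9, 7e9):
    for dtype in ("fp16", "int4"):
        size = weight_memory_gb(params, dtype)
        ok = fits_on_device(params, dtype, device_memory_gb=16)
        print(f"{params / 1e9:.0f}B params @ {dtype}: {size:.1f} GB, fits={ok}")
```

Under these assumptions, halving or quartering the bytes per parameter is what moves a larger model from not fitting to fitting, which is one reason smaller and lower-precision models attract attention for mobile robots.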

The spread of open frameworks and shared datasets has broadened collaboration, enabling more institutions and companies to experiment with and implement VLAs. Industry trends suggest the next generation of models could incorporate diffusion-based architectures and more advanced planning, further narrowing the gap between AI reasoning and practical robotic control.

Adopting vision-language-action models presents both opportunity and complexity for robotics, especially regarding scalability, safety, and transparency. As teams navigate hardware limitations and rigorous testing requirements, understanding the strengths and limitations of each approach will be vital to successful commercial applications. Readers interested in leveraging such technologies should track advancements in on-device model efficiency, real-world validation, and the development of shared evaluation standards. These aspects may ultimately determine which robotics innovations achieve durable, trustworthy adoption across industries.